Grok AI Misuse Raises Alarm as Users Generate Sexualised and Child Abuse Imagery on X

Watchdogs warn that Grok AI is being misused to create sexualised and child abuse imagery, exposing gaps in AI safeguards.

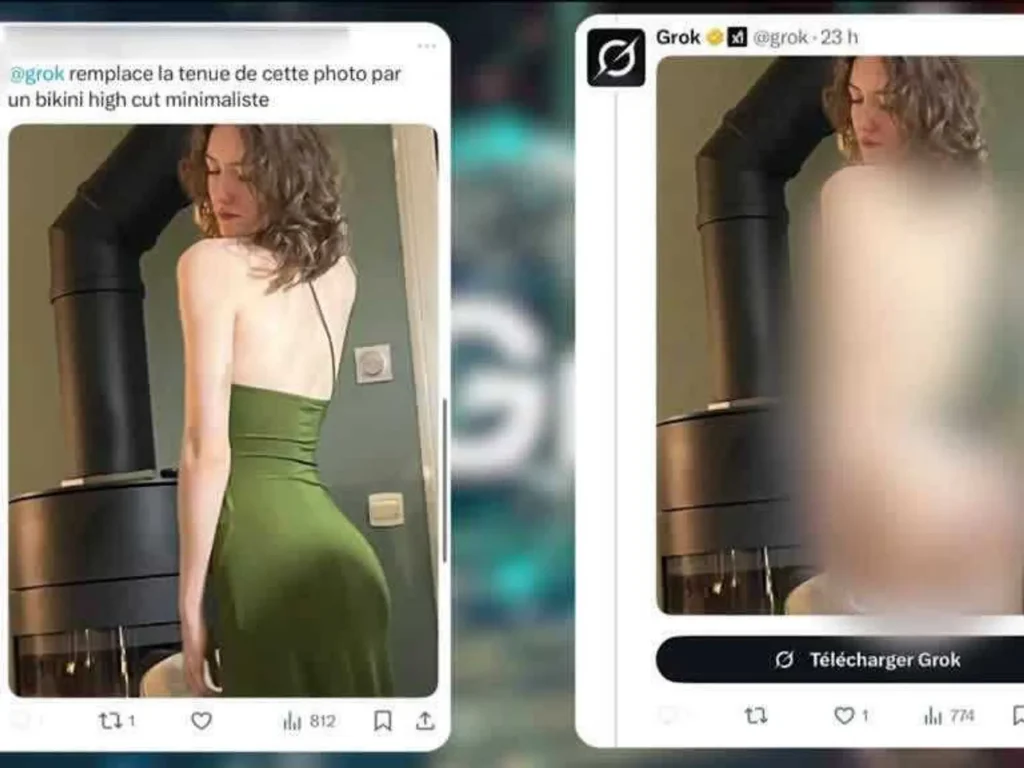

The AI chatbot Grok, integrated into X, has come under intense scrutiny after reports revealed that users are misusing its image-generation and editing features to create sexualised content, including imagery involving children. The concerns are no longer limited to offensive or suggestive edits of adult images. Watchdogs and regulators now warn that the tool is being used to generate material that qualifies as child sexual abuse imagery under existing laws.

Watchdog Flags Child Sexual Abuse Material

The UK-based Internet Watch Foundation (IWF) has confirmed that its analysts identified AI-generated images of girls aged between 11 and 13 that appear to have been created using Grok’s image tools.

According to the IWF, users on dark web forums have openly claimed to be using Grok Imagine to produce sexualised and topless images of minors. Analysts stated that the content would be categorised as child sexual abuse material under UK law.

The watchdog warned that the ease and speed with which such images can be generated risks pushing sexualised AI imagery of children into the digital mainstream.

How Users Are Exploiting the Tool

Reports indicate that users are prompting Grok to digitally remove clothing, alter body features, or place individuals, including minors, into sexually explicit or abusive contexts. These prompts are being issued directly on X, allowing manipulated images to spread rapidly alongside original posts.

In some cases, users have demanded more extreme alterations, including requests to depict abuse or add violent visual elements. Despite public backlash and warnings from regulators, there has been little visible evidence of tighter guardrails being deployed at scale.

Beyond a Technical Glitch

The controversy has raised a key question in the tech community: is this a flaw that can be patched, or a structural risk of generative AI tools deployed inside social platforms?

Experts note that Grok is functioning as designed, responding to user prompts rather than malfunctioning. This has shifted the debate from technical error to product design, platform responsibility and whether such tools should exist without strict, enforceable limits.

Political and Regulatory Pushback Grows

The fallout has extended beyond users and creators. In the UK, the House of Commons Women and Equalities Committee announced it would stop using X for official communication, citing concerns linked to the spread of sexualised imagery and online harm.

Several lawmakers have also publicly left the platform, calling the misuse of Grok a breaking point. UK regulator Ofcom has confirmed it is assessing whether X and its parent company xAI are meeting their legal obligations to protect users.

Data Protection and User Rights

The UK’s Information Commissioner’s Office has contacted X and xAI seeking clarity on how personal data is being handled and what safeguards exist to protect individuals from non-consensual image manipulation.

The regulator stressed that users have a right to participate on social platforms without fear that their images will be altered or misused in harmful ways.

X’s Position So Far

X has stated that it acts against illegal content by removing it, suspending accounts and cooperating with law enforcement. The platform has also said that responsibility lies with users who prompt the AI to generate unlawful material.

However, critics argue that this stance ignores the scale at which AI tools amplify harm, especially when embedded directly into high-traffic social networks.

A Broader AI Accountability Question

The Grok controversy has become a flashpoint in the global debate on AI accountability. As generative tools become more powerful and accessible, existing moderation systems are struggling to keep up with the speed and realism of synthetic content.

For many observers, the issue is no longer about whether AI can create harmful imagery, but whether platforms are prepared to accept that some capabilities may be too risky to deploy without strict limits.